Updated 24 Mar 2025

Managed Kubernetes platforms in 2026: Top providers compared

Author: Khushi Dubey


Cloud infrastructure in 2026 looks very different from what it did only a few years ago. Kubernetes has evolved from a complex orchestration tool used by specialized DevOps teams into the operational backbone of modern applications. From AI training pipelines to real-time media services, organizations now rely on Kubernetes clusters to manage massive volumes of containers across cloud, hybrid, and edge environments.

Managed Kubernetes has become a core layer of modern cloud infrastructure. Instead of managing clusters manually, organizations now rely on cloud providers to operate the control plane, automate scaling, and maintain reliability, allowing engineering teams to focus on building products.

This article explores how managed Kubernetes has evolved and highlights the leading platforms shaping the ecosystem in 2026, helping teams choose the option that best fits their workloads, budget, and operational needs.

What is managed Kubernetes?

Managed Kubernetes is a service model in which a cloud provider operates and maintains the Kubernetes control plane, while users focus on deploying and managing applications. The control plane includes critical components such as the Kubernetes API server, scheduler, controller manager, and etcd, the distributed key-value store that holds cluster state.

In a traditional self-managed setup, organizations must provision infrastructure, configure networking, maintain certificates, upgrade cluster versions, and ensure high availability across multiple zones. These tasks require specialized knowledge and constant maintenance.

Managed Kubernetes removes most of that operational burden. The provider ensures that the control plane remains available, secure, and up to date. Many services now also automate worker node lifecycle management, patching, scaling, and monitoring. As a result, engineering teams can deploy workloads quickly while relying on the provider for operational stability.

This model has become the preferred approach because maintaining Kubernetes clusters internally often consumes significant engineering time without delivering direct business value.
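
To make the automated node scaling mentioned above more concrete, here is a minimal sketch of the arithmetic behind a scale-up decision. It is a simplification under stated assumptions, not any provider's algorithm: the function name `min_nodes_for`, the pod sizes, and the node capacity are all hypothetical, and real autoscalers simulate pod scheduling rather than dividing totals.

```python
from typing import Iterable, Tuple


def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division without floating point."""
    return -(-a // b)


def min_nodes_for(
    pods: Iterable[Tuple[int, int]],
    node_cpu_m: int,
    node_mem_mib: int,
) -> int:
    """Lower bound on the nodes needed for a set of pod resource requests.

    Each pod is a (cpu_millicores, memory_mib) tuple. Node capacity is the
    allocatable CPU and memory of one node (hypothetical values here).
    """
    if node_cpu_m <= 0 or node_mem_mib <= 0:
        raise ValueError("node capacity must be positive")
    pods = list(pods)
    if not pods:
        return 0
    total_cpu = sum(cpu for cpu, _ in pods)
    total_mem = sum(mem for _, mem in pods)
    # Whichever resource runs out first determines the node count.
    return max(ceil_div(total_cpu, node_cpu_m), ceil_div(total_mem, node_mem_mib))


# Ten hypothetical pods, each requesting 500m CPU and 1024 MiB of memory.
pods = [(500, 1024)] * 10
print(min_nodes_for(pods, node_cpu_m=3500, node_mem_mib=14000))  # prints 2
```

The result is only a lower bound, because a pod cannot be split across nodes and system components also consume capacity. A managed service performs this kind of calculation continuously so that teams do not have to.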

Requirements for managed Kubernetes in 2026

Choosing a managed Kubernetes platform requires evaluating several architectural and operational capabilities. The needs of modern workloads have expanded far beyond simple container deployment.

A reliable managed Kubernetes platform must support scalable compute, advanced networking, AI hardware integration, and enterprise-grade security. Organizations must also consider cost transparency, observability tools, and multi-cluster orchestration.

Key requirements typically include:

- Highly available control plane that runs across multiple availability zones

- Automated node scaling for dynamic workload changes

- Integrated networking and load balancing within cloud virtual networks

- Native GPU and accelerator support for AI and machine learning workloads

- Strong identity and access management with role-based access control

- Automated upgrades and security patching to maintain cluster health

- Cost monitoring and resource optimization tools for FinOps visibility

- Multi-cluster management capabilities for large distributed systems

With these requirements defined, organizations can evaluate the specific cloud providers that offer managed Kubernetes services.
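
One way to turn the requirements above into a comparison is a weighted decision matrix. The sketch below is illustrative only: the weights, the 0 to 5 scores, and the names `provider_a` and `provider_b` are hypothetical placeholders, not ratings of any real platform.

```python
from typing import Dict

# Hypothetical weights: how much each requirement matters to one team.
WEIGHTS: Dict[str, int] = {
    "ha_control_plane": 3,
    "node_autoscaling": 2,
    "networking": 2,
    "gpu_support": 1,
    "iam_rbac": 3,
    "auto_upgrades": 2,
    "cost_visibility": 2,
    "multi_cluster": 1,
}

# Hypothetical 0-5 scores from an internal evaluation (placeholder data).
CANDIDATES: Dict[str, Dict[str, int]] = {
    "provider_a": {
        "ha_control_plane": 5, "node_autoscaling": 4, "networking": 4,
        "gpu_support": 2, "iam_rbac": 5, "auto_upgrades": 4,
        "cost_visibility": 3, "multi_cluster": 3,
    },
    "provider_b": {
        "ha_control_plane": 4, "node_autoscaling": 3, "networking": 3,
        "gpu_support": 5, "iam_rbac": 3, "auto_upgrades": 3,
        "cost_visibility": 5, "multi_cluster": 2,
    },
}


def weighted_score(scores: Dict[str, int], weights: Dict[str, int]) -> float:
    """Weighted average of scores; a missing criterion counts as 0."""
    total_weight = sum(weights.values())
    return sum(w * scores.get(name, 0) for name, w in weights.items()) / total_weight


ranking = sorted(
    CANDIDATES,
    key=lambda name: weighted_score(CANDIDATES[name], WEIGHTS),
    reverse=True,
)
for name in ranking:
    print(f"{name}: {weighted_score(CANDIDATES[name], WEIGHTS):.2f}")
```

Changing the weights changes the ranking, which is the point: a team running AI training would weight `gpu_support` far higher than a team running a small web service.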

The following sections explore several major platforms that currently dominate the managed Kubernetes landscape.

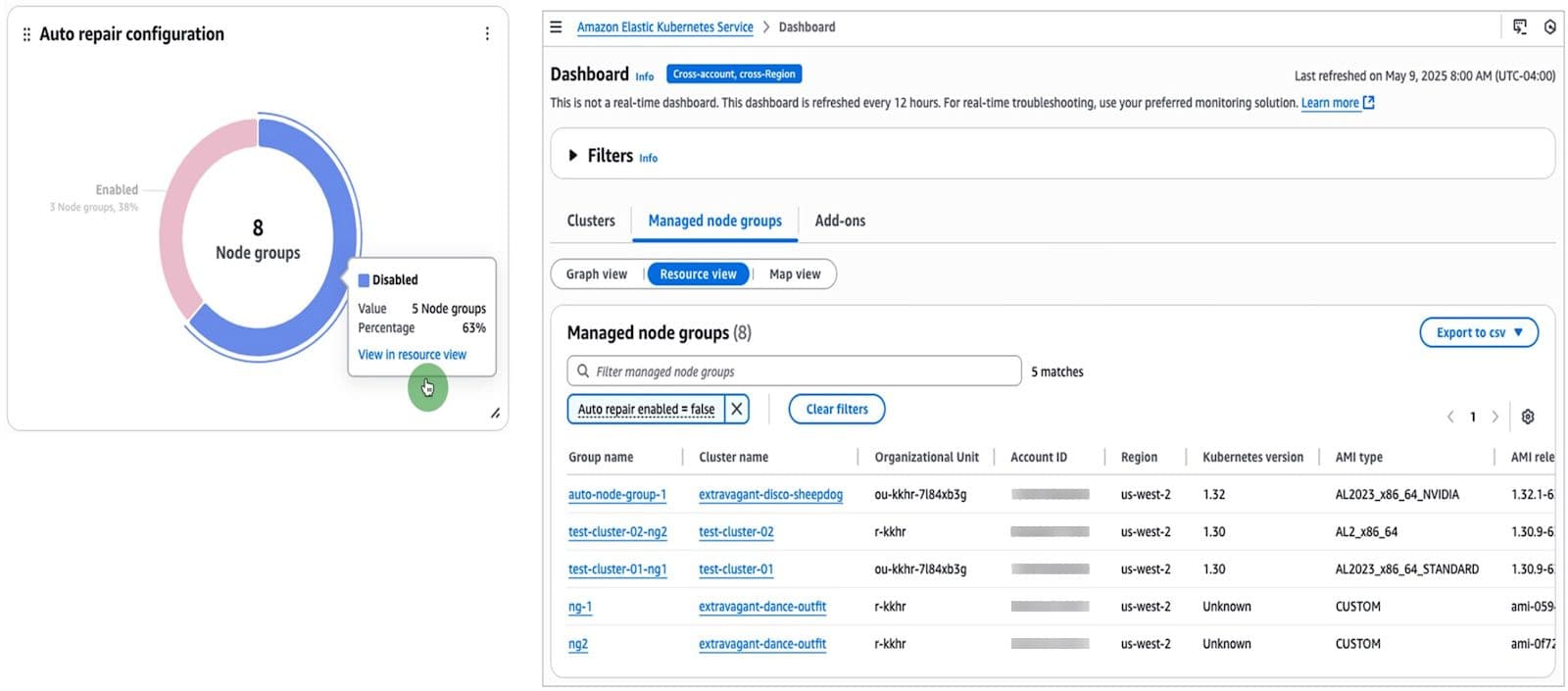

AWS Elastic Kubernetes Service (EKS)

AWS EKS Dashboard showing managed node group health and auto-repair configurations.

Amazon Elastic Kubernetes Service remains one of the most widely adopted Kubernetes platforms. Its popularity largely comes from deep integration with the broader AWS ecosystem and the ability to connect seamlessly with services such as IAM, EC2, S3, and CloudWatch. For organizations already running workloads on AWS, EKS provides a natural extension into container orchestration.

The platform has matured significantly with features like Auto Mode and managed node groups. These capabilities simplify infrastructure management and reduce the operational complexity traditionally associated with Kubernetes clusters.

Key capabilities include:

- Fully managed Kubernetes control plane across multiple availability zones

- Deep integration with AWS IAM for granular security control

- Managed node groups for automated node lifecycle management

- EKS Auto Mode for simplified infrastructure provisioning

- Extensive GPU support for AI and machine learning workloads

EKS works particularly well for enterprises already invested in the AWS ecosystem. Its flexibility and ecosystem integration make it suitable for large-scale cloud-native deployments.

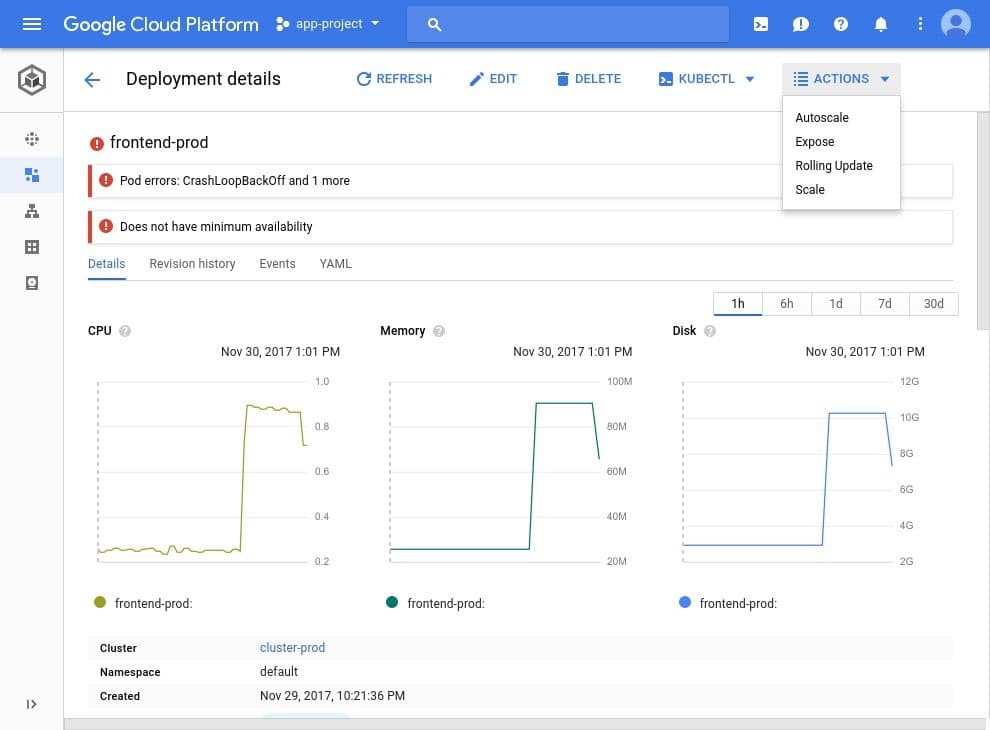

Google Kubernetes Engine (GKE)

Google Kubernetes Engine deployment details interface featuring CPU, Memory, and Disk utilization metrics.

Google Kubernetes Engine is often considered the most technically mature managed Kubernetes platform. Since Kubernetes was originally developed by Google, GKE benefits from deep operational expertise and early access to new orchestration capabilities.

One of its defining features is Autopilot mode, which abstracts node management entirely. Developers simply define resource requirements for pods while Google manages cluster infrastructure, security configurations, and scaling policies.
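
As a rough illustration of what "defining resource requirements for pods" looks like in practice, the sketch below builds a standard Kubernetes Pod manifest with CPU and memory requests and prints it as JSON. The helper `pod_manifest`, the pod name, the image, and the request sizes are assumptions chosen for the example; the field names follow the core Kubernetes Pod API and are not specific to Autopilot.

```python
import json
from typing import Any, Dict


def pod_manifest(name: str, image: str, cpu: str, memory: str) -> Dict[str, Any]:
    """Build a single-container Pod manifest with resource requests."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # Requests tell the scheduler how much capacity the pod needs.
                    "resources": {"requests": {"cpu": cpu, "memory": memory}},
                }
            ]
        },
    }


# Hypothetical workload: half a CPU core and 512 MiB of memory.
manifest = pod_manifest("web", "nginx:stable", cpu="500m", memory="512Mi")
print(json.dumps(manifest, indent=2))
```

Because Kubernetes accepts JSON as well as YAML manifests, output like this can be saved to a file and applied with `kubectl apply -f`. In a mode where the provider manages nodes, these requests are the main sizing input the developer supplies.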

Important capabilities include:

- Autopilot mode that eliminates node management entirely

- Native integration with Google Cloud AI and Vertex AI services

- Advanced networking performance through Google's global infrastructure

- Strong support for TPUs and high-end GPU accelerators

- Built-in observability tools such as Cloud Monitoring and Logging

GKE is particularly attractive for organizations running data-intensive or AI-focused workloads. Its automation and infrastructure performance make it one of the most advanced Kubernetes platforms available.

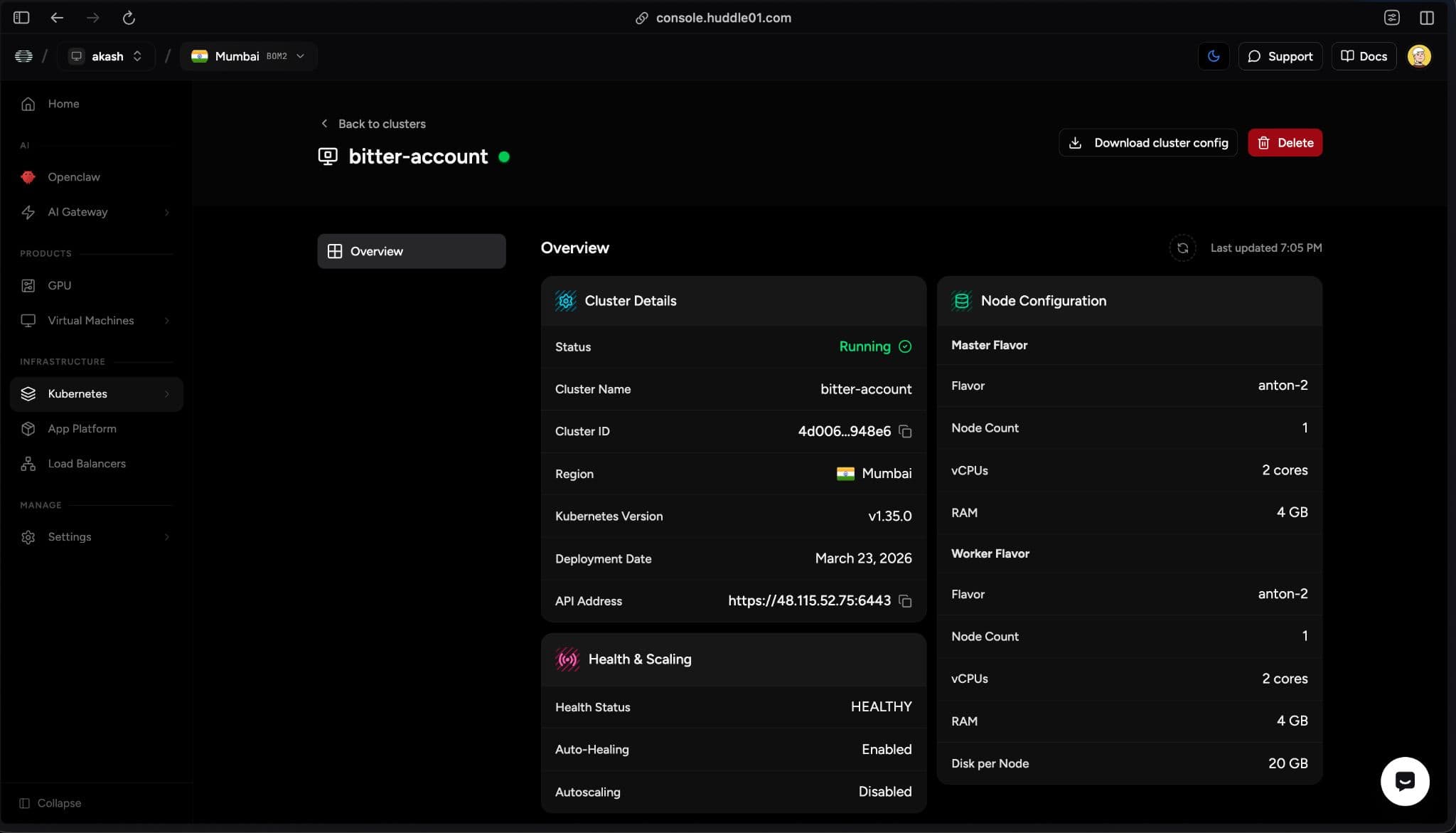

Huddle01 Cloud

Huddle01 Cloud Dashboard

Huddle01 Cloud is a high-performance managed Kubernetes platform built on dedicated AMD EPYC Zen 4 infrastructure with unthrottled NVMe storage. Unlike hyperscalers, it avoids shared CPUs and throttling mechanisms. Each node delivers consistent compute without CPU credits or IOPS limits. This ensures stable performance even during heavy workloads.
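
To show why CPU credits matter for sustained workloads, the following is a toy model of a credit-based burstable instance. It is a simplified sketch, not any provider's actual accounting: the function `simulate_burstable`, the 20% baseline, and the starting credit balance are all hypothetical values picked for illustration.

```python
from typing import List


def simulate_burstable(
    demand_pct: int,
    baseline_pct: int,
    initial_credits: int,
    minutes: int,
) -> List[int]:
    """Return the CPU percentage delivered each minute under a credit bucket.

    Credits are measured in percent-minutes. Each minute the instance earns
    `baseline_pct` credits and spends whatever it uses, up to its balance.
    """
    credits = initial_credits
    delivered = []
    for _ in range(minutes):
        credits += baseline_pct         # credits earned this minute
        used = min(demand_pct, credits)  # cannot spend more than the balance
        credits -= used
        delivered.append(used)
    return delivered


# A workload that wants 100% CPU for five minutes (hypothetical numbers).
burstable = simulate_burstable(demand_pct=100, baseline_pct=20,
                               initial_credits=160, minutes=5)
dedicated = [100] * 5  # dedicated cores deliver the full demand every minute

print("burstable:", burstable)
print("dedicated:", dedicated)
```

In this model the burstable instance delivers full speed only until its balance is spent, then drops to the baseline, while the dedicated line stays flat. Short, spiky workloads may never notice the difference; long builds and databases do.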

Reported benchmark results show strong gains over AWS in sustained workloads: up to 82% higher throughput, 5x more IOPS, and 7x lower latency. CI/CD pipelines reportedly run about 50% faster without performance drops. Because the hardware is dedicated, performance does not depend on burst allowances.

For a deeper understanding of its Kubernetes infrastructure and architecture, refer to the official Kubernetes documentation.

Key capabilities include:

- Dedicated AMD EPYC Zen 4 compute with DDR5 memory

- Unthrottled NVMe storage with no IOPS limits

- Flexible networking with custom CNI support

- Simple deployment with built-in load balancing

Huddle01 Cloud is ideal for compute-heavy tasks like CI/CD, databases, media processing, and AI inference. It suits teams that prioritize consistent performance over a wide range of managed services. Its cost efficiency and reliability make it a strong alternative to traditional hyperscalers.

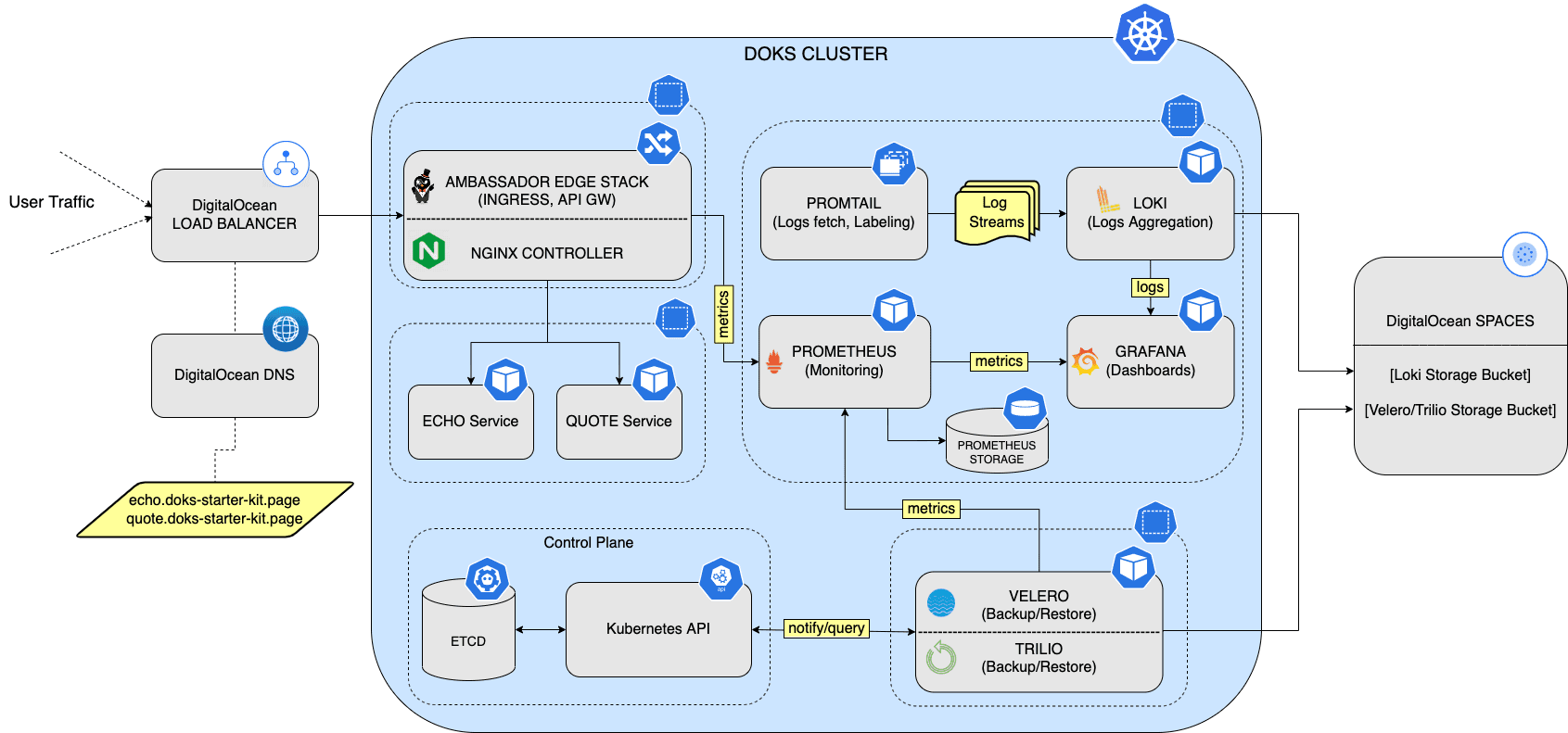

DigitalOcean Kubernetes (DOKS)

DigitalOcean Kubernetes (DOKS) Architecture

DigitalOcean Kubernetes focuses on simplicity and developer experience. The platform is designed for startups, independent developers, and small engineering teams that need container orchestration without the complexity often associated with hyperscale cloud platforms.

Clusters can be deployed quickly using a straightforward interface, and the platform emphasizes predictable pricing and clear infrastructure management. This simplicity makes it attractive for early-stage companies and rapid product development.

Key features include:

- Free managed control plane for Kubernetes clusters

- Simple cluster deployment with minimal configuration steps

- Transparent pricing with included bandwidth allowances

- Integrated load balancers and block storage support

- Clean and intuitive management dashboard for developers

DigitalOcean Kubernetes is ideal for teams that prioritize ease of use and predictable infrastructure costs. It provides enough capability for production workloads while keeping operational complexity low.

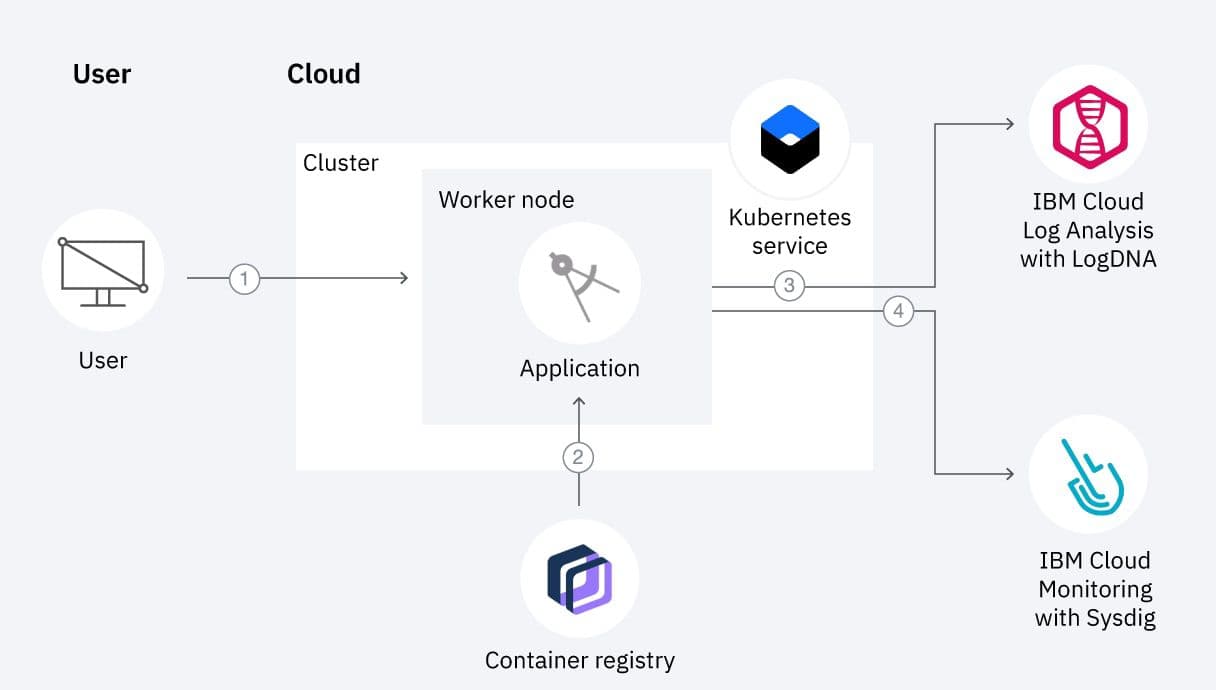

IBM Cloud Kubernetes Service (IKS)

Workflow diagram of IBM Cloud Kubernetes Service showing integration with LogDNA and Sysdig monitoring.

IBM Cloud Kubernetes Service is designed primarily for enterprise organizations operating in regulated industries or requiring hybrid cloud architectures. IBM focuses heavily on integrating Kubernetes with enterprise systems and on-premises infrastructure.

The platform also integrates closely with Red Hat OpenShift, allowing organizations to maintain consistent container orchestration environments across private data centers and public clouds.

Key capabilities include:

- Deep integration with Red Hat OpenShift for hybrid cloud environments

- Enterprise security and compliance features for regulated industries

- Integration with IBM watsonx AI services for data-driven applications

- Support for large-scale GPU-based machine learning workloads

- Advanced storage and data management capabilities for stateful systems

IBM Cloud Kubernetes Service is particularly suitable for large enterprises with strict governance requirements. Its hybrid cloud strategy enables organizations to modernize infrastructure without abandoning legacy systems.

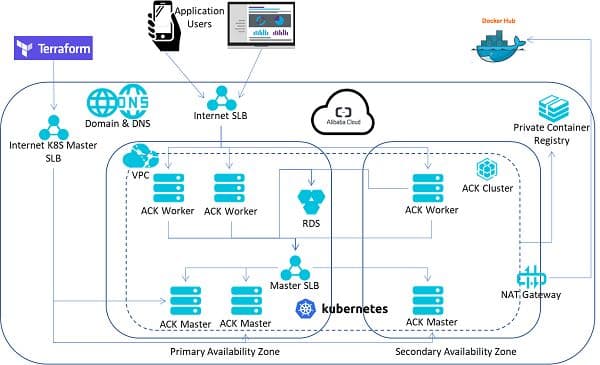

Alibaba Cloud Container Service for Kubernetes (ACK)

Network topology of Alibaba Cloud Container Service (ACK) across primary and secondary availability zones using Terraform.

Alibaba Cloud Container Service for Kubernetes dominates much of the Asia Pacific cloud infrastructure market. It offers a highly scalable orchestration platform designed to support extremely large workloads, particularly those related to artificial intelligence.

The platform integrates tightly with Alibaba’s data processing ecosystem and includes specialized optimizations for distributed machine learning workloads.

Important capabilities include:

- Highly scalable Kubernetes clusters designed for massive workloads

- Advanced GPU scheduling for AI training and inference tasks

- Multi-cluster orchestration through the ACK One management layer

- Optimized networking for high-throughput distributed computing

- Integrated observability and monitoring tools for large clusters

ACK is especially strong in regions where Alibaba Cloud infrastructure is widely deployed. Its AI optimizations and large-scale orchestration capabilities make it attractive for data-heavy applications.

Conclusion

Managed Kubernetes has become a core component of modern cloud infrastructure. Instead of managing clusters and control plane operations themselves, engineering teams now rely on cloud providers to handle orchestration, scaling, and reliability.

The ideal platform depends on workload requirements and operational priorities. While hyperscalers like AWS and Google offer powerful ecosystems, newer providers and developer-focused platforms emphasize lower costs, reduced latency, and simpler operations.

As container orchestration evolves, Kubernetes is increasingly becoming invisible infrastructure, quietly managing scaling and networking while developers focus on building and deploying applications.